TL;DR: To build non-invasive neural interfaces, we need to scale brain data. MEG measures the brain’s magnetic field, which passes through the skull with minimal impedance, and produces the highest quality non-invasive data available. But current MEG systems cost millions and require shielded rooms. Acoustically driven ferromagnetic resonance sensors (ADFMRs) are a chip-scale MEMS technology with enough dynamic range to operate in Earth’s ambient magnetic field without shielding. To build ADFMRs at scale, we need to solve problems in MEMS fabrication and ferromagnetic film deposition. This field is underinvested. More groups need to be working on ADFMR and adjacent materials science.

Neuralink has repeatedly shown us that neural activity is decodable outside the lab, but their interfaces require brain surgery. Even as they push on automated robotic surgeons, this limits their reach to clinical populations for the foreseeable future.

To build neural interfaces that empower everyday humans, we have to eliminate the surgical barrier. Neuroimaging model architectures are already capable, but they are starved for the volume of data that only a non-invasive, scalable platform can provide. The challenge is a trade-off between accessibility and signal quality.

When groups of neurons fire in the brain together, a couple things happen: electromagnetic fields are generated and blood flows towards the synapses over a few seconds. Every non-invasive neuroimaging method is built on measuring one of these processes.

Blood-based methods (fMRI, fNIRS) have high spatial resolution but are inherently slow & indirect, because blood takes a few seconds to move. Electrical methods (EEG) are fast but noisy. The skull is electrically resistive, smearing and attenuating the signal before it reaches the sensor. Trying to decode speech from EEG is like transcribing a conversation through a concrete wall.

Magnetoencephalography (MEG) measures the brain’s magnetic field as it passes through the skull with minimal impedance. The temporal resolution rivals invasive methods and the decoding results, even on tiny datasets, are surprisingly strong. This is the most underleveraged modality in neuroscience.

The reason MEG hasn’t scaled is hardware. Current systems require cryogenic cooling or magnetically shielded rooms and cost millions. Only about 30 exist in the US. This doc argues that MEG is the right signal to scale, and outlines a path to making it practical through ADFMR, a new chip-scale sensor.

A review of neuroimaging modalities

Capturing the complexity of neuroimaging requires balancing three competing variables: spatial detail, temporal speed, and practical scalability. Historically, researchers have had to choose between high-resolution data that requires invasive surgery (Intracortical/ECoG) or non-invasive methods that sacrifice either speed (fMRI) or precision (EEG). As the neural decoding field matures, the bottleneck becomes the quality and quantity of the input data itself.

The diagram below maps the current landscape of modalities, highlighting MEG as a unique candidate with clinical-grade resolution and scalable deployment.

Beyond fully invasive and non-invasive approaches, a number of minimally invasive solutions have gained traction that are hard to accurately plot above. Some promising work pairs ultrasound with gene therapy to read brain data: an AAV (adeno-associated virus) vector delivers genes that make neurons produce gas vesicles, which reflect ultrasound. The vesicles reflect differently as neurons fire & calcium concentrations change. Ultrasound, however, requires a cranial window (a section of skull removed or thinned) to be effective. Other tech like calcium imaging or neural dust produces high-fidelity data but also sits firmly in the ‘surgery required’ camp.

The common critique of any non-invasive method is that the signal is fundamentally too poor to be useful. It’s true that non-invasive imaging can only reliably capture pyramidal cell activity (~80% of all cortical neurons) in outer cortical regions. Signals decay rapidly with distance from the brain, so subcortical imaging is difficult. But these outer regions house the ‘output layer’ of the brain, containing the high-fidelity signals required for tasks like fine motor control. An imperfect analogy can be drawn to mechanistic interpretability in LLMs: the cortex functions similarly to the final layers of a transformer’s residual stream, where representations have been largely shaped by deeper processing but are still being refined into their final, decodable form.

Of the non-invasive methods that can capture this cortical activity, MEG produces the highest fidelity signal.

MEG quality & potential

Even with limited availability, MEG decoding results are already impressive.

BrainOmni and MEG-GPT have demonstrated that joint training on MEG signals and large-scale datasets outperforms traditional task-specific models. MEG-GPT generates synthetic MEG data that preserves complex spatio-spectral properties, suggesting that the signal contains exploitable information that scales with more data.

In the speech & language decoding space, MEGFormer and Brain2Qwerty show speech and text decoding accuracies that substantially beat EEG. Character Error Rates (CER) drop from 67% with EEG to as low as 19% with MEG in top performers, nearly the threshold where auto-correct systems become viable.
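For readers who haven’t worked with CER before, here’s a minimal sketch of how the metric is usually computed: the character-level edit distance between the decoded text and the reference, divided by the reference length. This is the standard textbook definition, not code from either paper, and the example strings are made up.

```python
def edit_distance(reference: str, hypothesis: str) -> int:
    """Levenshtein distance: minimum insertions, deletions, substitutions."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        current = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            substitution_cost = 0 if ref_char == hyp_char else 1
            current.append(min(
                previous[j] + 1,                      # delete ref_char
                current[j - 1] + 1,                   # insert hyp_char
                previous[j - 1] + substitution_cost,  # match or substitute
            ))
        previous = current
    return previous[-1]


def character_error_rate(reference: str, hypothesis: str) -> float:
    """CER = edit distance / number of reference characters."""
    if not reference:
        raise ValueError("reference must be non-empty")
    return edit_distance(reference, hypothesis) / len(reference)


# Made-up example: one wrong character in an 11-character sentence.
print(f"{character_error_rate('hello world', 'hallo world'):.1%}")
```

A CER of 19% means roughly one in five characters needs an edit, which is why a language-model-style auto-correct layer on top starts to look plausible.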

On motor control, studies have demonstrated finger-level decoding and hand-gesture recognition that rival the performance of invasive methods like ECoG.

The most impressive aspect of these results is the limited amount of training data. BrainOmni, MEG-GPT, and the decoding studies mentioned above were trained on hundreds of hours of MEG data. MEG is producing state-of-the-art decoding performance on a tiny fraction of the data that drove breakthroughs in other domains. If we can scale MEG data collection by 10-100x, we can significantly boost performance.

A more comprehensive list of MEG studies with summaries can be found in the appendix.

Scaling MEG data

Ok, MEG can produce better results than other non-invasive methods. So why not just collect more data?

The brain produces a weak magnetic field on the order of femtoteslas (1 fT = 10⁻¹⁵ T). In comparison, the Earth’s magnetic field is tens of microteslas (1 μT = 10⁻⁶ T). To build a practical MEG sensor, you need to solve two problems:

- Sensitivity: The sensor has to detect perturbations at the femtotesla scale. That’s very faint.

- Ambient field compensation: The Earth’s field will saturate any sensor that isn’t either shielded from it or built with enough dynamic range to handle it. Think of a camera trying to photograph a candle next to a floodlight. You either block the floodlight or use a camera with enough dynamic range to capture both.

An MEG sensor’s effectiveness is defined by how it tackles these two problems.
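To put rough numbers on the candle-and-floodlight picture, here’s a back-of-the-envelope sketch. The 50 μT ambient field and 100 fT brain signal are round illustrative values I’m assuming, not measurements from any of the systems discussed here.

```python
import math

# Assumed, illustrative magnitudes (not measured values):
EARTH_FIELD_T = 50e-6      # ~50 microtesla ambient field
BRAIN_SIGNAL_T = 100e-15   # ~100 femtotesla cortical signal


def dynamic_range(ambient_t: float, signal_t: float) -> tuple[float, float]:
    """Return (decibels, bits) needed to resolve signal_t on top of ambient_t."""
    ratio = ambient_t / signal_t
    decibels = 20 * math.log10(ratio)   # field amplitudes, hence 20*log10
    bits = math.log2(ratio)             # ideal converter bits for that ratio
    return decibels, bits


db, bits = dynamic_range(EARTH_FIELD_T, BRAIN_SIGNAL_T)
print(f"ratio {EARTH_FIELD_T / BRAIN_SIGNAL_T:.0e}: about {db:.0f} dB, about {bits:.0f} bits")
```

Under these assumptions an unshielded sensor has to span well over 170 dB, which is why the two options are "block the floodlight" (shielding) or "build a much better camera" (dynamic range).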

The current production system is SQUID-MEG, which relies on cryogenic cooling and massive magnetically shielded rooms. Clinical SQUID systems achieve noise floors of 2-5 fT/√Hz (femtoteslas per root-hertz), a measure of the sensor’s intrinsic noise across different frequencies. At this sensitivity, SQUIDs can reliably detect small neuron populations firing together. However, SQUID systems are notoriously expensive ($1M+ each).

Over the past decade, OPM-MEG systems removed the need for cryogenics and significantly lowered cost. OPMs are quantum sensors that use lasers + heated vapor cells to detect small changes in magnetic spin (more details below). Commercial OPMs like the QuSpin QZFM Gen-3 achieve 7-15 fT/√Hz (a bit worse than SQUID). Unfortunately, OPMs have a low dynamic range, so magnetic shielding is still required. I’ve included a discussion on scaling cheaper magnetic shielding in the appendix.
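For a feel of what a noise floor in fT/√Hz means in practice, here’s a small sketch that converts a noise density into integrated RMS noise over a measurement band. It assumes the noise is flat (white) across the band, which real sensors only approximate, and the 100 Hz bandwidth is my own illustrative choice.

```python
import math


def rms_noise_ft(noise_density_ft_per_rthz: float, bandwidth_hz: float) -> float:
    """Integrated RMS noise (fT) for a flat noise density over bandwidth_hz."""
    return noise_density_ft_per_rthz * math.sqrt(bandwidth_hz)


BANDWIDTH_HZ = 100.0  # assumed analysis band

# Noise floors quoted in the text above (fT/sqrt(Hz)).
quoted_floors = {
    "SQUID, low end": 2.0,
    "SQUID, high end": 5.0,
    "OPM, low end": 7.0,
    "OPM, high end": 15.0,
}

for label, density in quoted_floors.items():
    print(f"{label}: {rms_noise_ft(density, BANDWIDTH_HZ):.0f} fT rms over {BANDWIDTH_HZ:.0f} Hz")
```

The square-root scaling is the useful part: narrowing the band by 4x only halves the integrated noise.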

The most promising path to scalable MEG data is through acoustically driven ferromagnetic resonance (ADFMR) sensors, developed by researchers at Berkeley.

ADFMR devices are entirely solid-state and operate at room temperature. Because they are mechanically driven and voltage-controlled, they have high dynamic range, potentially solving our magnetic shielding woes.

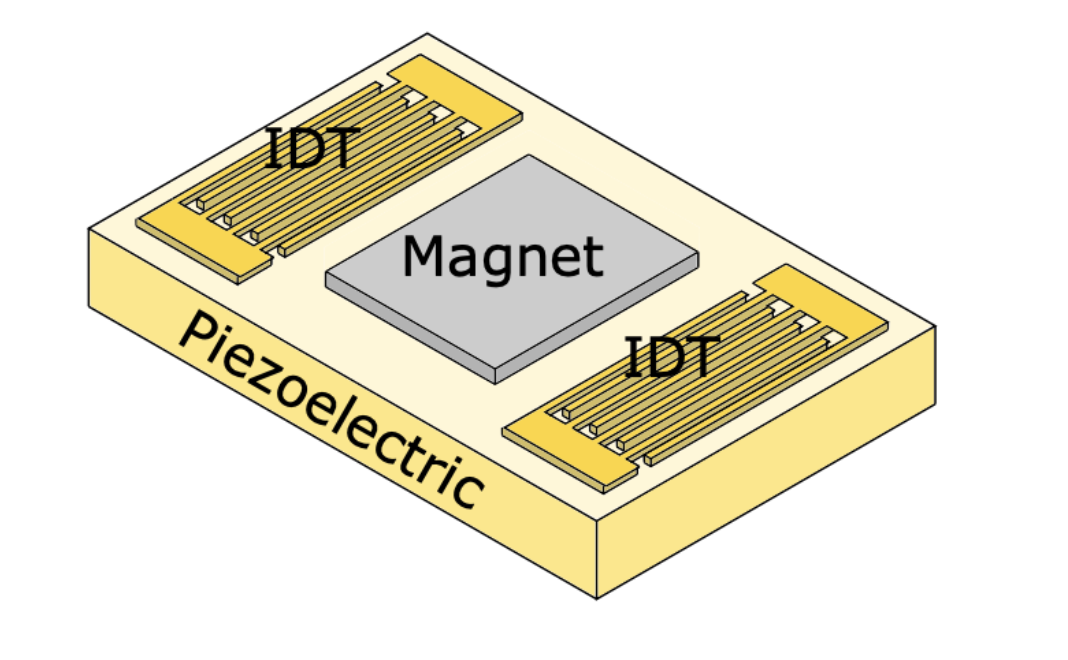

An ADFMR sensor is a micro-electromechanical system (MEMS) built on a piezoelectric substrate. It has 3 distinguishable layers:

- Input Interdigital Transducer (IDT): converts voltage into mechanical vibrations with little “stepping feet”

- Ferromagnetic film: a material containing magnetic moments that act as the main magnetic sensor

- Output IDT: converts the modulated vibrations back into electrical signal

However, current production ADFMR devices operate in the pT/√Hz range, roughly 3-4 orders of magnitude short of the 2-5 fT/√Hz sensitivity that clinical MEG requires. Closing this gap will require advances in material science like optimizing magnetoelastic coupling, improving film uniformity, and reducing film damping.
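A quick sketch of how big that gap is, and why it can’t simply be averaged away. The device noise levels (1, 10, and 100 pT/√Hz) are hypothetical points I picked to span "the pT range", and the averaging estimate assumes each sensor’s noise is uncorrelated with the others, so it falls as 1/√N.

```python
import math

TARGETS_FT = (2.0, 5.0)                    # clinical noise floors quoted above
ASSUMED_DEVICES_PT = (1.0, 10.0, 100.0)    # hypothetical device noise levels


def orders_of_magnitude(current_ft: float, target_ft: float) -> float:
    """How many factors of ten separate current noise from the target."""
    return math.log10(current_ft / target_ft)


def sensors_to_average(current_ft: float, target_ft: float) -> int:
    """Sensors needed if uncorrelated noise averages down as 1/sqrt(N)."""
    return math.ceil((current_ft / target_ft) ** 2)


for device_pt in ASSUMED_DEVICES_PT:
    current_ft = device_pt * 1000.0  # 1 pT = 1000 fT
    for target_ft in TARGETS_FT:
        gap = orders_of_magnitude(current_ft, target_ft)
        count = sensors_to_average(current_ft, target_ft)
        print(f"{device_pt:g} pT -> {target_ft:g} fT: {gap:.1f} orders, ~{count:,} sensors averaged")
```

Even in the most optimistic case, brute-force averaging needs tens of thousands of sensors per channel, so the improvement has to come from the film itself.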

The original Berkeley thesis showed that switching from nickel to Co25Fe75 films could drop the dominant noise source (thermal magnetic noise) by 7x, which would put ADFMR in line with SQUID. This was done in a lab, so no promises on real production validity, but still a promising direction.

Another potential path involves coupling ADFMR with nitrogen vacancy (NV) centers in diamond. NV centers are quantum defects in the diamond lattice that are themselves pT-level biomagnetic sensors. However, they require microwave hardware and external magnetic fields to operate. The Berkeley thesis demonstrated that ADFMR can drive NV spin states at zero external field, suggesting a hybrid sensor could combine ADFMR’s dynamic range with the NV center’s sensitivity. See appendix for more depth on both Co25Fe75 films and NV centers.

Manufacturing ADFMRs is also non-trivial. The piezoelectric substrate and IDT patterning can be built with existing MEMS foundry processes, but the ferromagnetic film process is bespoke. Most foundries don’t have processes for maintaining magnetic film stability during fabrication. The likely path is partnering with a foundry for the substrate while building specialized in-house sputtering capability for the ferromagnetic layer.

ADFMR sensitivity and manufacturing are the two highest leverage unsolved problems in the space.

Zooming out

The case for MEG holds regardless of how the broader BCI landscape develops:

- Invasive methods scale: Neuralink and others succeed at automating surgery and deploying brain implants to millions. Foundation models trained on invasive data map out neural representations with high fidelity. This is great for MEG. Those models can transfer to non-invasive sensor spaces, but only if the non-invasive modality has enough signal quality to support the mapping. MEG is the non-invasive modality best placed to support it.

- Other non-invasive methods scale first: EEG wins the consumer BCI market and proves demand for non-invasive brain interfaces. For focus/sleep tracking and simple interfaces, it’s good enough. But EEG’s ceiling is rooted in physics. No amount of data can unmix what arrives at the sensor already blended. Brain2Qwerty showed this: 67% CER with EEG versus 32% on average (19% for the best participants) with MEG on the same architecture. When users want real-time speech or fine motor control, MEG is the upgrade path that EEG’s success creates demand for.

Either way, we need more work on MEG interfaces, and we need cheaper ways to build them.

The researchers who developed ADFMR started Sonera, currently the only company pursuing ADFMR for biomagnetic sensing. Because of the current sensitivity gap, Sonera is starting with detecting muscle magnetic activity (MMG), which falls in the pT range and is achievable with today’s devices.

This highlights a broader problem. The ecosystem for ADFMR work is thin. There's one company and no established foundry process for the ferromagnetic films that make these sensors work. Much of the neural decoding field focuses primarily on EEG & fNIRS for scalability or fMRI for high resolution. The other large group working on MEG focuses on OPMs & active shielding via matrix coils.

MEG is the highest-fidelity non-invasive brain signal we have. ADFMR and adjacent architectures are the only way we can make it scalable. The bottleneck is materials science. Closing the pT to fT gap will unlock the entire non-invasive BCI stack.

Appendix

FAQ

Q: The inverse problem (going from sensor-space to source-space) is fundamentally ill-posed. How can MEG ever give us reliable source localization?

Yeah, the inverse problem doesn’t have a unique solution. But source localization isn’t the bottleneck anymore. Modern decoding approaches work directly on sensor-space signals. They don’t need perfect source reconstruction. They need high SNR, good temporal resolution, and enough data to learn the mapping.

Q: Connectomics and invasive methods give us neuron-level resolution. Why should we care about cortical MEG signals?

To study circuit-level mechanisms or subcortical dynamics, invasive data is irreplaceable. But for decoding motor intent, speech, and visual perception, cortex-level signals already do the heavy lifting. You don’t need to see every neuron fire to decode what the system is computing. We see empirically that MEG-based speech decoders are approaching ECoG performance on tiny datasets.

Q: Foundation models are just hype. What makes you think scaling laws apply to biological signals?

The existing models suggest they do. BrainOmni trained jointly on EEG and MEG beats task-specific models. MEG-GPT generates synthetic data that preserves spatio-spectral structure. These are signs that the data has exploitable information. I’m not sure if that scales to GPT-4 levels, but I know that more high-quality brain data produces better models.

Q: EEG has orders of magnitude more data and better infrastructure. Why not double down?

EEG scaling is great and more data always helps, but there are tasks where signal quality will hit a ceiling no matter the data scale. If you’re going to invest in scaling non-invasive data, you should invest in the highest fidelity signal available.

Selected MEG studies

BrainOmni: A Brain Foundation Model for Unified EEG + MEG Signals

This paper uses a VQ-VAE tokenizer and is trained on both EEG & MEG datasets, heavily skewing towards EEG data. The resulting model outperforms SOTA task-specific models on downstream tasks, showing readers the benefit of joint training & the gains from hardening with higher-fidelity signal (MEG).

MEG-GPT: A transformer-based foundation model for MEG data

Using an adapted transformer architecture and training on a large MEG-only dataset (Cam-CAN), MEG-GPT generates realistic, synthetic MEG data that preserves spatio-spectral properties. Tests on zero-shot downstream tasks improved metrics across the board. Measuring the effectiveness of synthetic data isn’t always clear. The paper uses the inclusion of transient events and population variability as indicators of MEG-GPT’s robustness.

Decoding speech perception from non-invasive brain recordings

A contrastive learning approach to map MEG signal & heard audio signal into a shared embedding space. This approach led to a top-10 segment accuracy of 72.5%, compared to 19% with only EEG data. The analysis showed that the decoder primarily relied on lexical and contextual semantic representations within the MEG signal.
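To make the "top-10 segment accuracy" metric concrete, here’s a minimal sketch of how a retrieval score like this can be computed once both modalities live in a shared embedding space. It only illustrates the evaluation idea, not the paper’s training pipeline. The function name and the random stand-in embeddings are my own, and NumPy is assumed to be installed.

```python
import numpy as np


def top_k_accuracy(brain_emb: np.ndarray, audio_emb: np.ndarray, k: int = 10) -> float:
    """Fraction of brain segments whose true audio segment ranks in the top k.

    Row i of brain_emb is assumed to correspond to row i of audio_emb.
    """
    brain = brain_emb / np.linalg.norm(brain_emb, axis=1, keepdims=True)
    audio = audio_emb / np.linalg.norm(audio_emb, axis=1, keepdims=True)
    similarity = brain @ audio.T                  # cosine similarity matrix
    true_scores = np.diag(similarity)             # score of the correct pairing
    # Rank = number of wrong candidates that score strictly higher.
    rank = (similarity > true_scores[:, None]).sum(axis=1)
    return float((rank < k).mean())


# Stand-in data: audio embeddings plus noise play the role of decoded MEG.
rng = np.random.default_rng(0)
audio_segments = rng.normal(size=(200, 32))
decoded_from_meg = audio_segments + rng.normal(scale=2.0, size=(200, 32))
print(f"top-10 accuracy on toy data: {top_k_accuracy(decoded_from_meg, audio_segments):.2f}")
```

With 200 candidates, chance level for top-10 is 5%, which is the kind of baseline to keep in mind when reading the 72.5% vs 19% comparison.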

Brain-to-Text Decoding: A Non-invasive Approach via Typing

A study on typing performed by researchers at Meta, which included data collection within a large MEG system. Participants typed briefly memorized sentences. The model, Brain2Qwerty, was transformer-based. With MEG, Brain2Qwerty reaches, on average, a character-error rate of 32% and substantially outperforms EEG (CER: 67%). For the best participants, the model achieves a CER of 19%, and can perfectly decode a variety of sentences outside of the training set. It’s worth noting that 32% is nearly the CER needed for auto-correct systems to work effectively.

MEGFormer: enhancing speech decoding from brain activity through extended semantic representations

With a CNN + transformer architecture, researchers boosted speech decoding accuracy on standard MEG datasets from 69% to 83%. Longer speech fragments had the greatest accuracy gains, taking advantage of MEG’s high temporal resolution.

Brain decoding: toward real-time reconstruction of visual perception

Combined an MEG encoder with a diffusion model. Specific metrics weren’t given, but researchers boasted a 7x improvement in image retrieval tasks over the baseline methods. From a quick eye test on images generated at different post stimulus timestamps, we can see that MEG data contains information about semantic representations.

MEG-based brain-computer interface for hand-gesture decoding using deep learning

A deep learning model trained on MEG data during rock-paper-scissors gestures. The model outperformed SVM and EEG methods significantly and rivaled performance from invasive methods like ECoG. The paper noted that a subset of sensors was enough for high performance.

Convolutional neural networks decode finger movements in motor sequence learning from MEG data

A lightweight CNN was trained to decode individual finger movement from MEG data. This is known to be a difficult task for lower resolution modalities like EEG. This model achieved 82% accuracy for distinguishing right hand finger-level movement, showing significant gains.

Quantum sensors & OPM operation

OPMs are quantum sensors that measure small magnetic fields by exploiting the magnetic properties of alkali metal atoms (typically rubidium or cesium) contained in a heated vapor cell.

The process works as follows:

- A circularly polarized laser beam pumps the atoms into a specific spin state, aligning their magnetic moments in one direction.

- These aligned spins begin to precess (rotate) around any external magnetic field at a frequency proportional to the field strength. This is the Larmor frequency, f = γB, where γ is the gyromagnetic ratio (it varies by atom and is expressed here in frequency units, Hz per tesla) and B is the magnetic field.

- External magnetic fields (like those from neural activity) cause the atomic spins to realign and shift their precession frequency. This is the Zeeman effect. The stronger the field, the faster the precession.

- The precessing atoms rotate the polarization of the laser light in proportion to their frequency shift. By measuring the polarization rotation, we can calculate the magnetic field.
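The Larmor relation from the steps above can be sketched in a few lines. The gyromagnetic ratios are approximate ground-state values (about 7 Hz/nT for rubidium-87 and 3.5 Hz/nT for cesium-133), taken in frequency units so that f = γB holds directly. Treat them as round numbers, and the field values as illustrative.

```python
# Approximate gyromagnetic ratios in Hz per nanotesla (round values).
GAMMA_HZ_PER_NT = {
    "Rb-87": 7.0,
    "Cs-133": 3.5,
}


def larmor_frequency_hz(field_nt: float, atom: str = "Rb-87") -> float:
    """Larmor precession frequency f = gamma * B, with B in nanotesla."""
    return GAMMA_HZ_PER_NT[atom] * field_nt


# Illustrative fields: Earth-scale ambient vs. a brain-scale perturbation.
earth_field_nt = 50_000.0      # 50 microtesla
brain_signal_nt = 100e-6       # 100 femtotesla

print(f"Earth-scale field: {larmor_frequency_hz(earth_field_nt):,.0f} Hz")
print(f"Brain-scale shift: {larmor_frequency_hz(brain_signal_nt):.1e} Hz")
```

The contrast is the point: an Earth-scale field drives precession at hundreds of kHz, while the neural contribution shifts it by well under a millihertz.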

The vapor is heated until atoms collide so frequently that spin-exchange interactions average out rather than adding noise. This is the SERF (Spin-Exchange Relaxation-Free) regime. This only works in near-zero ambient fields, which is another reason OPMs require shielding. In SERF mode, sensitivities below 1 fT/√Hz are possible.

Magnetic shielding advances

Magnetic shielding blocks or redirects external magnetic fields to create a low-field environment for sensitive sensors. There are two approaches: passive and active.

Passive shielding uses high-permeability materials (like mu-metal) that attract and redirect magnetic field lines around the shielded space. These materials act like a magnetic sponge, absorbing external fields before they reach the sensor. Traditional magnetically shielded rooms (MSRs) use multiple layers of mu-metal and aluminum to achieve high field attenuation.

Active shielding uses sensors to measure the ambient field, and coils to generate a field that cancels it out in real time. Think noise-cancelling headphones for magnetic fields. The hard problem is generating uniform fields across the entire sensor array. If your cancellation field is uneven, some sensors will be over or undercompensated.

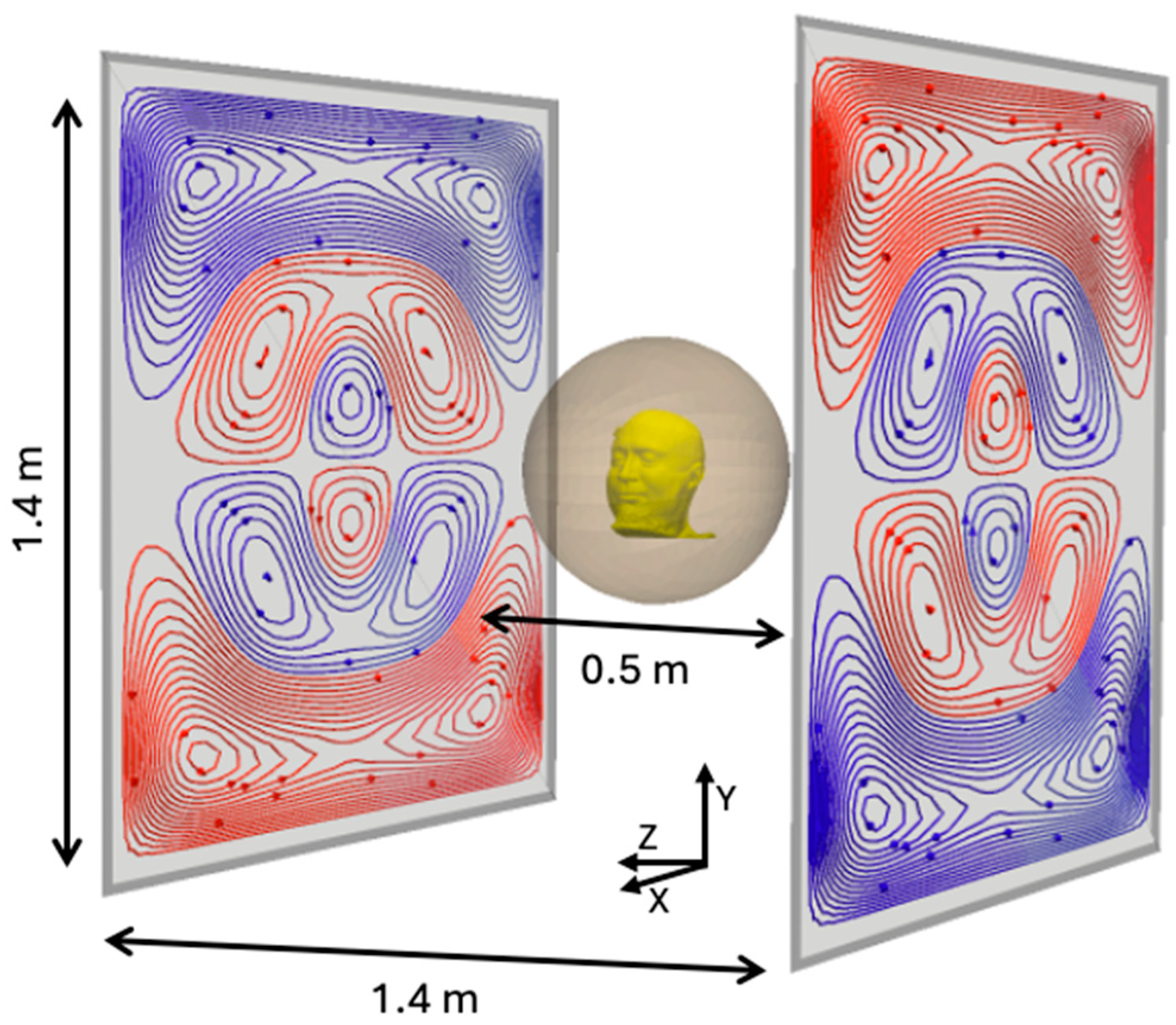

The latest advances in the active shielding space are biplanar PCB coils and matrix coils, both of which utilize clever coil geometries. Biplanar PCB coils use two parallel PCBs with precisely patterned copper traces to create highly uniform fields between them. Matrix coils take this further with multi-layer designs that can independently control different field components (x, y, z) and gradients. These systems measure the field at multiple reference points, compute the required cancellation currents in real time, and drive the coil array to null out the ambient field.
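The "compute the required cancellation currents" step is, at its core, a least-squares problem. Here’s a minimal sketch under stated assumptions: a calibration matrix (hypothetical, random here) gives the field each coil produces at each reference reading per unit current, and we solve for the currents that best null the measured ambient field. Real systems add dynamics, coil limits, and gradient terms that this leaves out. NumPy is assumed.

```python
import numpy as np


def cancellation_currents(coil_to_field: np.ndarray, ambient: np.ndarray) -> np.ndarray:
    """Least-squares currents so that coil_to_field @ currents ~= -ambient."""
    currents, *_ = np.linalg.lstsq(coil_to_field, -ambient, rcond=None)
    return currents


rng = np.random.default_rng(0)
n_readings, n_coils = 12, 6   # hypothetical: 12 reference readings, 6 coils

# Hypothetical calibration: field (nT) at each reading per mA in each coil.
coil_to_field = rng.normal(size=(n_readings, n_coils))
ambient_nt = 50.0 * rng.normal(size=n_readings)   # made-up ambient readings

currents_ma = cancellation_currents(coil_to_field, ambient_nt)
residual_nt = ambient_nt + coil_to_field @ currents_ma

print(f"ambient norm:  {np.linalg.norm(ambient_nt):.1f} nT")
print(f"residual norm: {np.linalg.norm(residual_nt):.1f} nT")
```

With more readings than coils, the residual generally isn’t zero. That leftover is the "uneven cancellation" problem described above, and it’s what better coil geometries are trying to shrink.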

Active shielding doesn’t completely eliminate the need for passive shielding yet, but it reduces it from multi-layer mu-metal rooms to a single-layer enclosure, significantly dropping cost.

ADFMR operation

The magnetic sensing process for ADFMR sensors can be simplified into a few energy transfer steps:

- The input IDT generates a surface acoustic wave (SAW) that travels along the piezoelectric substrate.

- The SAW physically deforms the ferromagnetic lattice. Because the film is magnetoelastic, this strain rotates the magnetic moments. The resonance frequency of this interaction depends on the total ambient magnetic field, so any change in the field (like our neural signals) shifts how much SAW energy gets absorbed by the film.

- The output IDT measures the energy remaining in the SAW and converts it back to voltage. The difference in energy tells us the magnetic field.

The reason ADFMR has high dynamic range is that the SAW frequency is voltage-controlled. As the ambient magnetic field changes, you can tune the input frequency to keep the system operating near resonance, so the sensor never saturates. OPMs, by contrast, rely on atomic spin states that get disrupted in strong fields, which is why they need shielding to function.
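Here’s a toy model of why retuning matters. It is not the device physics from the thesis: I’m assuming a simple Lorentzian absorption line, and I’m representing "retuning the drive" as moving the line’s center so the sensor stays on the steep flank. The linewidth, peak absorption, and field values are all made up.

```python
def absorption_db(field_ut: float, center_ut: float,
                  linewidth_ut: float = 5.0, peak_db: float = 10.0) -> float:
    """Toy Lorentzian absorption line (dB) versus total field (microtesla)."""
    detuning = (field_ut - center_ut) / linewidth_ut
    return peak_db / (1.0 + detuning * detuning)


def slope_db_per_ut(field_ut: float, center_ut: float, step_ut: float = 1e-3) -> float:
    """Numerical sensitivity: change in absorption per microtesla."""
    upper = absorption_db(field_ut + step_ut, center_ut)
    lower = absorption_db(field_ut - step_ut, center_ut)
    return (upper - lower) / (2 * step_ut)


FLANK_OFFSET_UT = 5.0          # operate one linewidth below the line center
initial_field_ut = 50.0        # field the sensor was tuned for
drifted_field_ut = 80.0        # ambient field after the wearer moves

fixed_center = initial_field_ut + FLANK_OFFSET_UT
tracked_center = drifted_field_ut + FLANK_OFFSET_UT   # drive retuned to follow

fixed_slope = slope_db_per_ut(drifted_field_ut, fixed_center)
tracked_slope = slope_db_per_ut(drifted_field_ut, tracked_center)
print(f"fixed tuning:   {abs(fixed_slope):.3f} dB/uT")
print(f"tracked tuning: {abs(tracked_slope):.3f} dB/uT")
```

In this toy picture the fixed-tuning sensor slides off its resonance and loses most of its response, while the tracked one keeps the same slope wherever the ambient field goes.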

The tradeoff is sensitivity. The energy transfer from SAW to magnetic rotation is lossy. The ferromagnetic film’s magnetoelastic coupling coefficient determines how efficiently mechanical strain converts to magnetic precession, and current films lose most of the energy in the process. Film damping and thickness uniformity compound the problem. This is where much of the gap between current pT performance and the fT levels that MEG requires lives.

ADFMR scaling details

ADFMR’s absorption properties make it well suited for array-based scaling. SAW power absorption increases exponentially with the length of the ferromagnetic film along the propagation direction, but only improves marginally with film thickness beyond ~20 nm (due to increased damping). This makes extending the film’s length the more efficient way to increase sensitivity. The optimal operating point for magnetic sensing is around 6 dB of absorption, which can be tuned by adjusting the film’s thickness & length. Because each sensor element can be independently designed to hit a target sensitivity, they can be tiled into arrays to boost overall performance.
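One way to read the length scaling is as Beer-Lambert-style attenuation: transmitted SAW power falls as exp(-αL), so absorption in dB grows linearly with film length. That reading is my assumption, and the attenuation coefficient below is a hypothetical placeholder, not a measured value. The sketch solves for the length that lands on the ~6 dB operating point.

```python
import math

# For P_out = P_in * exp(-alpha * L), absorption in dB is this constant times alpha * L.
DB_PER_UNIT_EXPONENT = 10 * math.log10(math.e)   # about 4.343


def absorption_db(alpha_per_mm: float, length_mm: float) -> float:
    """Absorbed SAW power in dB for attenuation alpha over a film of given length."""
    return DB_PER_UNIT_EXPONENT * alpha_per_mm * length_mm


def length_for_absorption_mm(alpha_per_mm: float, target_db: float) -> float:
    """Film length that produces target_db of absorption."""
    return target_db / (DB_PER_UNIT_EXPONENT * alpha_per_mm)


def fraction_absorbed(db: float) -> float:
    """Fraction of input power absorbed at a given absorption in dB."""
    return 1.0 - 10 ** (-db / 10)


ALPHA_PER_MM = 0.5   # hypothetical attenuation coefficient
TARGET_DB = 6.0      # operating point mentioned above

length_mm = length_for_absorption_mm(ALPHA_PER_MM, TARGET_DB)
print(f"length for {TARGET_DB:.0f} dB: {length_mm:.2f} mm")
print(f"power absorbed at {TARGET_DB:.0f} dB: {fraction_absorbed(TARGET_DB):.0%}")
```

Under this model, hitting a target absorption is a matter of trading α (set by the film material and thickness) against length, which is what makes per-element tuning straightforward.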

The dominant source of ADFMR noise is thermal magnetic fluctuations in the ferromagnetic film. For a 1 cm x 1 cm x 100 nm nickel film, the Berkeley thesis calculates this at ~4.3 fT/√Hz, already near the clinical MEG range. With room-temperature sputtered Co25Fe75 films, the calculated noise floor drops to 0.63 fT/√Hz, competitive with the best SQUID and SERF magnetometers. Building a cobalt-iron film introduces material science problems that we haven’t solved yet.

An additional noise reduction strategy is on-chip gradiometry. The thesis proposes placing two independent ADFMR devices on a single chip sharing one large magnetic film. This gradiometric configuration rejects common noise sources (Earth’s field, power line interference, etc.) without requiring magnetic shielding.
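The idea behind gradiometry fits in a few lines of simulation. This is an idealized sketch: both sensors see exactly the same far-field interference, only one sees the nearby source, and intrinsic sensor noise is left out (in practice it adds in quadrature across the two sensors). All amplitudes and frequencies are illustrative, and NumPy is assumed.

```python
import numpy as np

SAMPLE_RATE_HZ = 1000.0                        # assumed sampling rate
t = np.arange(10_000) / SAMPLE_RATE_HZ         # 10 seconds of samples

# Far-field interference, identical at both sensors (tesla).
earth_field = 50e-6
mains_hum = 100e-9 * np.sin(2 * np.pi * 60.0 * t)
common_mode = earth_field + mains_hum

# Nearby source, seen only by sensor A in this idealized setup (tesla).
local_signal = 100e-15 * np.sin(2 * np.pi * 10.0 * t)

sensor_a = common_mode + local_signal
sensor_b = common_mode

gradiometer = sensor_a - sensor_b              # common-mode terms cancel

print(f"raw peak at sensor A:  {np.max(np.abs(sensor_a)):.2e} T")
print(f"gradiometer peak:      {np.max(np.abs(gradiometer)):.2e} T")
```

The subtraction recovers the femtotesla-scale signal from underneath a background hundreds of millions of times larger, but only to the degree that the two sensors really are matched. That matching is the case for building both on one chip with a shared film.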

Nitrogen vacancy (NV) centers + ADFMR

NV centers are quantum defects in diamond lattices that function as extremely sensitive magnetic field sensors at room temperature. They can be optically initialized and read out. A laser excites the NV center, and the fluorescence intensity differs depending on the spin state. This makes NV centers natural candidates for high precision magnetometry.

The key result, demonstrated in the original ADFMR thesis from Berkeley, is that ADFMR devices can drive off-resonant coupling to NV centers without any applied external magnetic field. An input voltage drives a surface acoustic wave → the SAW strains the ferromagnetic film into resonance → the precessing magnetization couples into nearby NV centers, modulating their photoluminescence. The researchers deposited commercial NV centers directly onto ADFMR devices and observed strong coupling in both nickel and cobalt films.

This pairing can help us in two ways:

- To optimize readouts. Standard ADFMR is an electrical transmission measurement. You measure the difference in SAW power between input and output IDTs. This lacks spatial resolution. NV centers can probe with sub-micron precision. In the experiments, NV photoluminescence was used to map ADFMR absorption across the ferromagnetic film, revealing details that bulk electrical measurements miss.

- To boost sensitivity. NV centers achieve low pT/√Hz sensitivities on their own but typically require microwave antennas and external field sources for spin manipulation. ADFMR provides an on-chip, electrically controlled alternative. A hybrid ADFMR-NV sensor could combine ADFMR’s high dynamic range (enabling unshielded operation) with NV diamond's sensitivity.

This combined system is still early. The thesis demonstrated proof-of-concept coupling, not a working magnetometer. But the fact that strong NV coupling was observed at power levels orders of magnitude below traditional NV experiments, and at zero field, suggests this path has real potential. No group has yet built a dedicated ADFMR-NV hybrid sensor at high sensitivity.